GenAI's Creative Destruction Will Force Us to Measure What Matters

James Gimbi

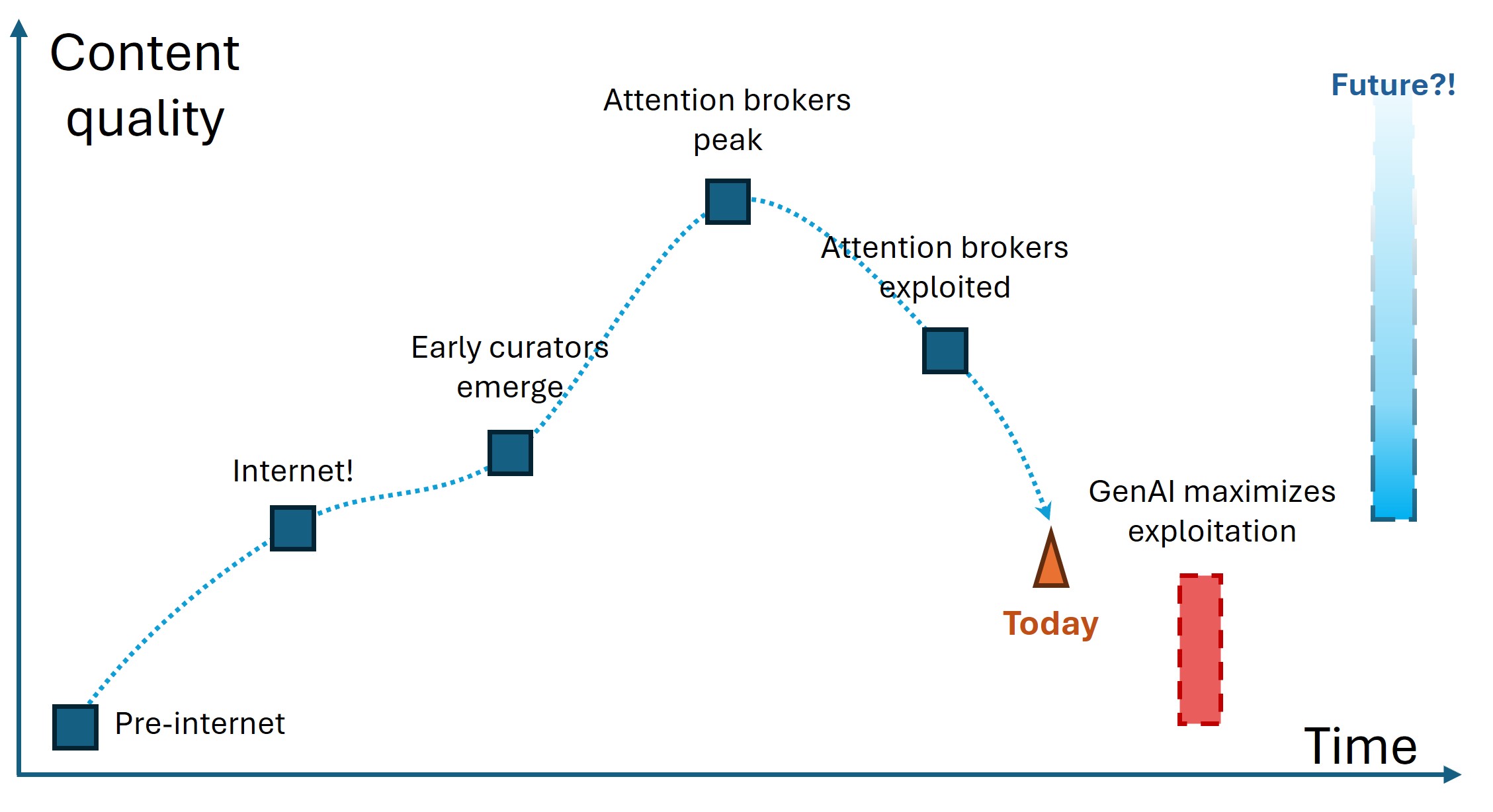

Takeaway: I think generative AI will first worsen, but then resolve, the enshittification[1] of digital content. In the short term, it accelerates the exploitation of quality signals that were already decaying (here I focus on volume); in the longer term, it will help push us toward higher-fidelity signals.

Measuring “close enough”

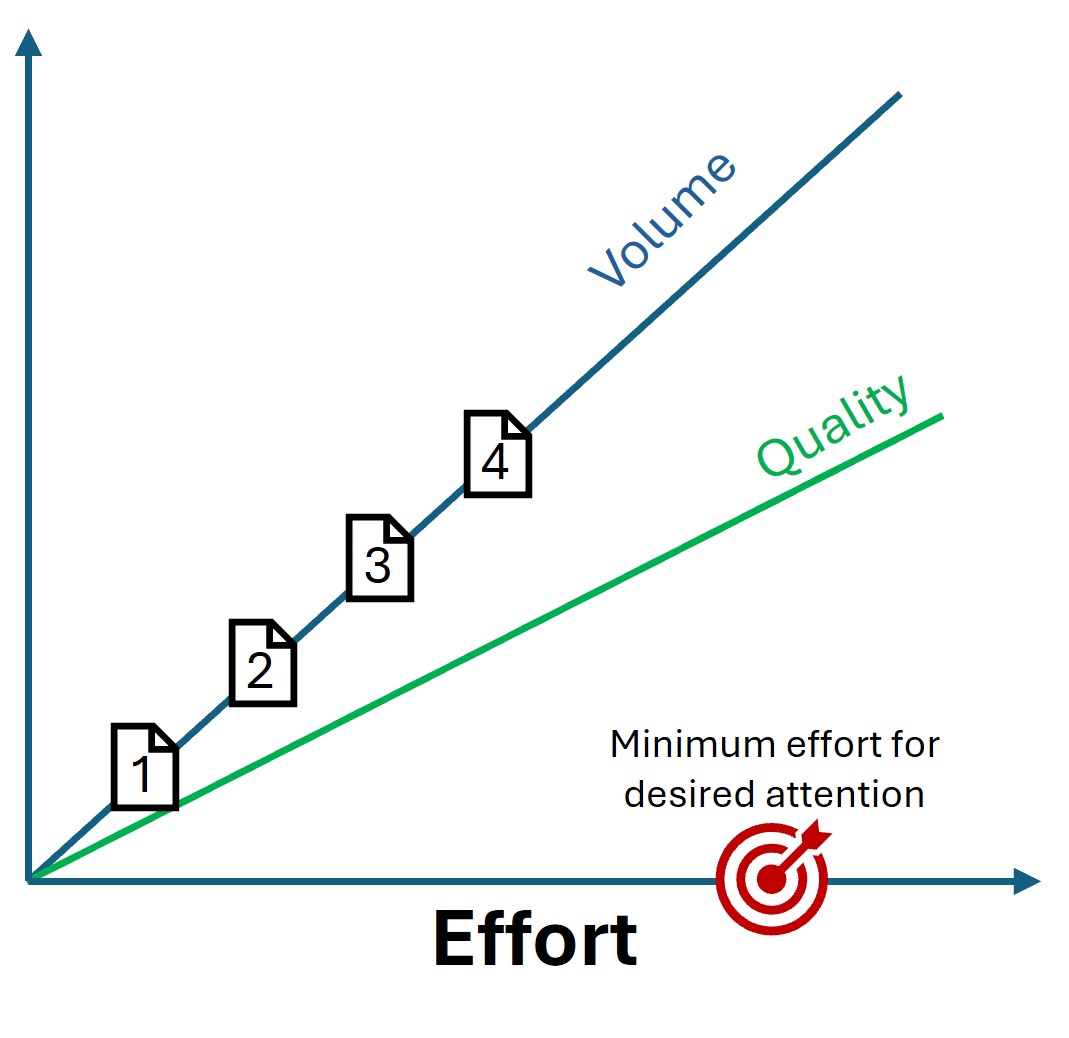

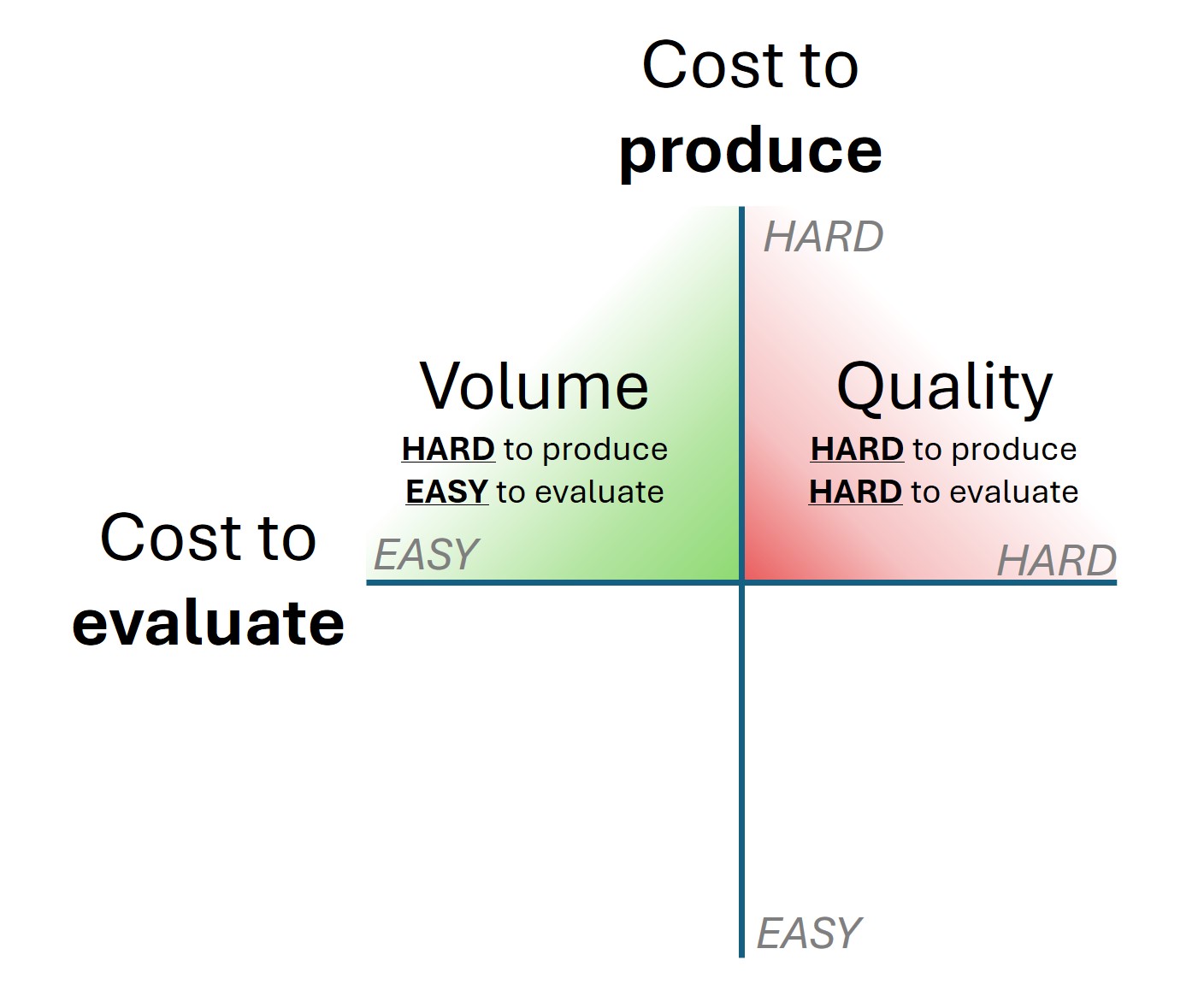

The mediocre state of media didn’t emerge overnight; it developed through a series of market responses to how we measure and reward content value. Consider content volume (e.g., word count, video length, publishing frequency) and content quality (e.g., utility, novelty, aesthetics). Prior to GenAI, creators incurred costs to produce both volume and quality.

Importantly, the linear cost of producing volume signaled a baseline investment by the creator. Each page of a coherent essay, whether the first or the fourth, carried roughly the same baseline cost. Even assuming no effort toward quality at all, a four-page essay takes four times the effort of a one-page essay; and who is going to bother publishing a longer essay without putting at least some effort into quality?[2]

The relationship between cost and volume does not have much value for individual consumers – you’re not likely to seek out the heaviest book, the longest movie, or the album with the most tracks.

But the cost-volume relationship has tremendous value for attention brokers like search engines, advertisers, and media markets. Because they cannot measure quality at scale, brokers rely on effort signals, such as volume, as a proxy for quality.

And so, attention brokers began to index based on volume.[3] And it worked! Volume signals effectively surfaced high-effort material, especially in the early days of the internet. Of course, we all know where this path leads. Quality is more costly to produce than volume and, in fact, poor quality can be hidden by high volume.

Naturally, actors exploited how attention brokers indexed volume. Content stuffing generates a decent ROI, and the attention arbitrage vulnerability, while closing, still exists. Volume production increasingly outpaced quality production, and this gap contributes to the gradual “enshittification” of the internet. Signal-to-noise ratios spiral downward as the volume-quality relationship decays, leaving users to separate the wheat from an ever-growing mountain of chaff designed not for them, but to game attention brokers.[4]

GenAI pumps up the volume

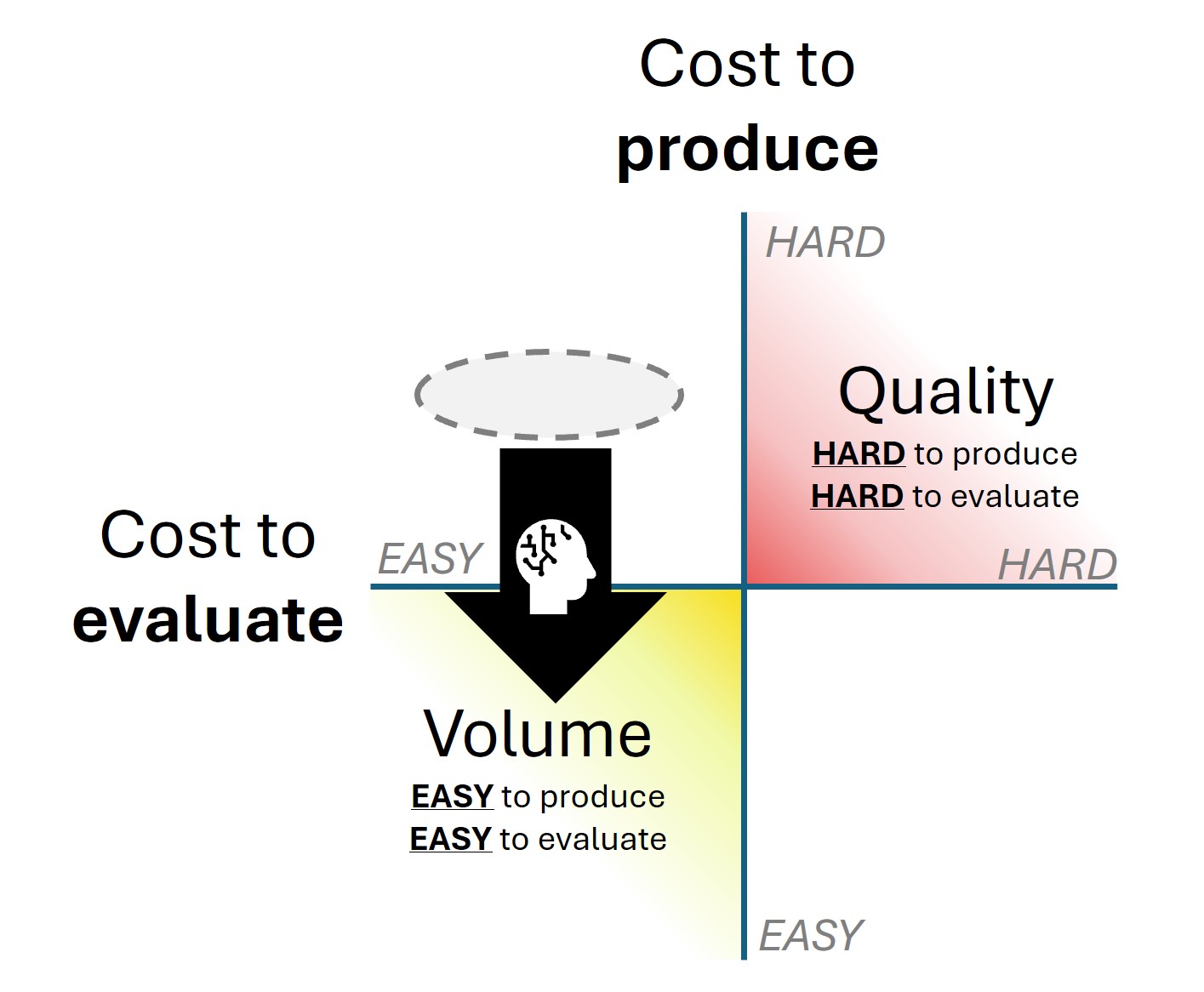

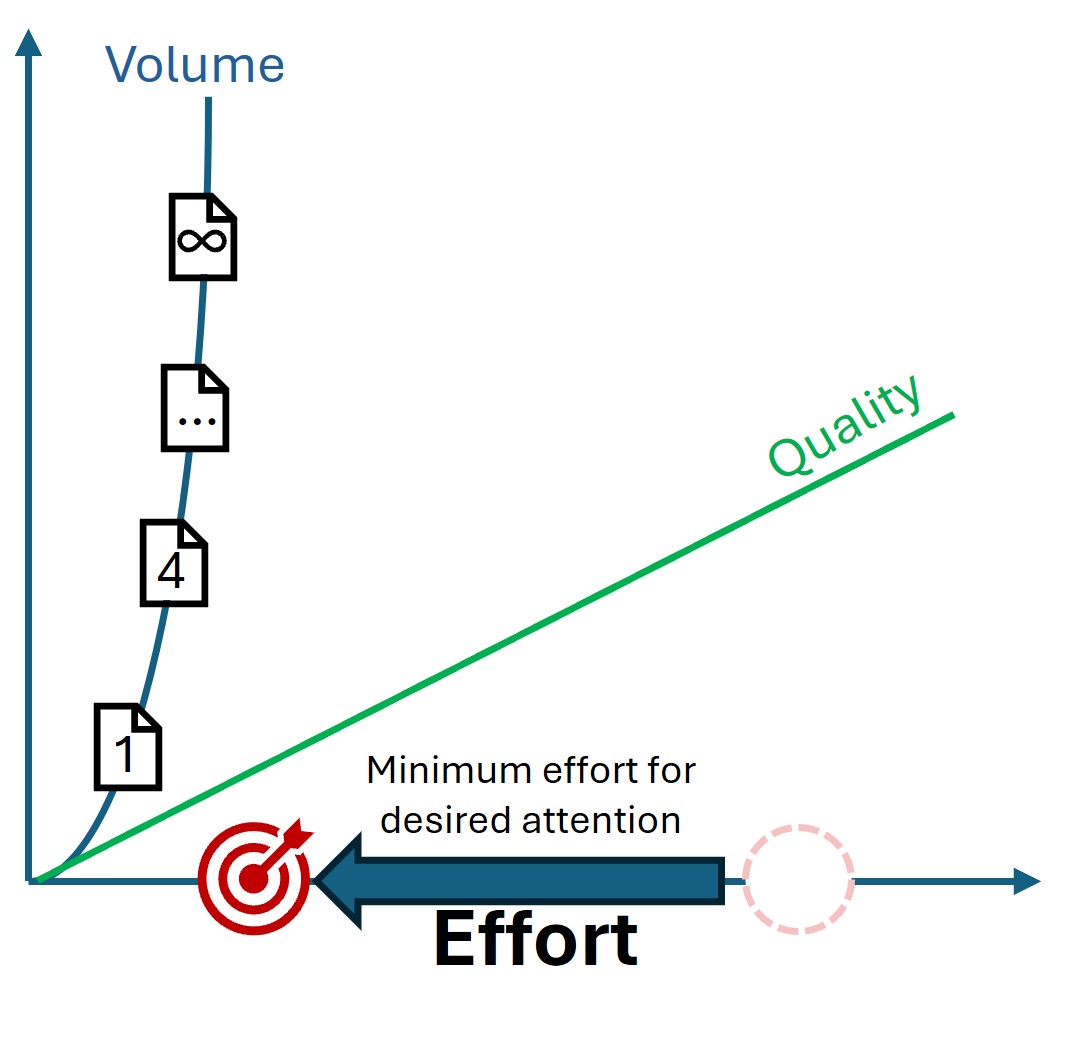

GenAI obliterated the cost to produce volumes of content. Attention brokers just lost their quality proxy.

Because GenAI slashes the effort needed to game existing attention brokers, the feared (and probably real) short-term result is a dramatically wider gap between volume production and quality production. We already see GenAI accelerating enshittification in most corners of media, and there is no reason to expect that to slow down.

Measuring what matters

But then? Well, then I think that things will get better again. The commoditization of volume can only lead to two outcomes:

- Nothing changes: enshittification persists and useful media ends.

- Something changes: creators, brokers, and consumers adapt to the obliteration of the cost/volume relationship.

I simply do not think the machine is likely to break down entirely, so I’m pretty sure we’re looking at “something changes.” There is too much societal value in deploying attention usefully, and the bottom falls out of enshittification without an audience accustomed to quality material.

I think the excessive deployment of low-quality content will (painfully but effectively) accelerate the death of floating proxy signals like volume, and I think we will pivot toward higher-fidelity signals. Perhaps:

- Reputation: Talk has become cheap, so value may shift to fidelity. This means heavier reliance on earned trust, both in individual producers and in attention brokers. If consumers demand strong diligence from attention brokers, I expect brokers to take on more of a curator role than today’s media influencers do. We may also develop more accessible forms of peer review and endorsement.

- Efficiency: “If I had more time, I would have written a shorter letter.” We may demand that producers and brokers mitigate the consumption cost to their audience (i.e., clarity and brevity). Efficiency lowers the cost of consuming low-quality content and makes it harder for low-quality content to hide in the first place.

- Better effort signals: Some signals still have merit as proof of baseline effort, and that costly effort implies an attempt at quality. We may renew our interest in media delivered outside the domain of digital production: live seminars and performances, handmade products, and other demonstrations that the creator is willing to invest serious time in their content.

- Sustained relevance: Respect for established or classic material, and suspicion of very new content.

- AI-enabled distillation and scoring: GenAI might provide novel solutions. Perhaps high-fidelity enrichment that evaluates content quality and trustworthiness, supported by replicable methods. Perhaps dynamic content delivery that strips out noise and delivers signal in a manner highly tuned to each consumer. This topic is wide open.

Now we are deep into speculation-land. I’m no better at predicting the future than the average bear, but I have an intuitive feeling about the shape of the path we’re on.

I believe GenAI-fueled enshittification will get worse for a while, and it might get pretty damn bad. But the forces at play are strong enough to pull us back to a better content ecosystem. At the very least, I think we will return to today’s level. Probably better. Possibly a whole lot better. If we can get momentum moving in the right direction again, there is no limit to where this can go.

[1] https://en.wikipedia.org/wiki/Enshittification. Also known as platform decay: a pattern in which online products and services decline in quality.

[2] Prior to digital attention brokers like search engines, of course. We’re getting there.

[3] Not exclusively, of course. But all else being equal, volume usually earns more favorable treatment from attention brokers.

[4] Recipe blogs are a delicious example. Virtually every food blog bloats itself with chummy narrative, crosslinks, and ads before letting you see actionable material. This behavior is literally taught as necessary in paid “how to be a food blogger” courses because it maximizes SEO. Getting straight to the point is now the mark of an amateur.